Software Development Life Cycle

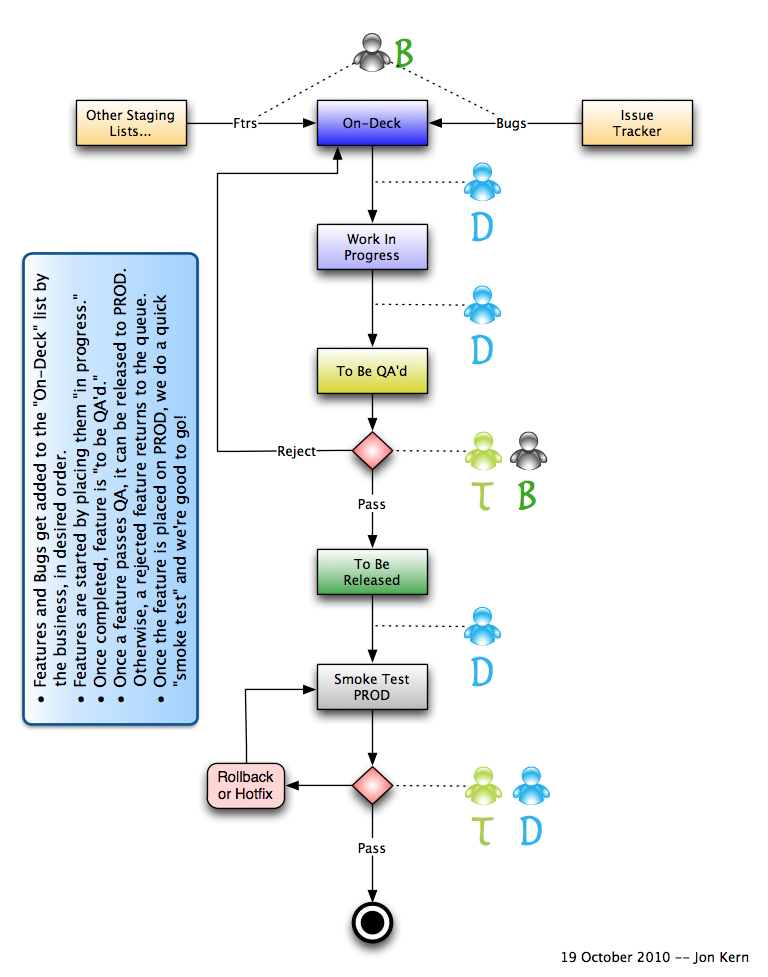

In software development, we want to make our process as visible as possible to all participants and stakeholders. To that end, behold a simple process that uses a handful of Basecamp To-Do Lists as markers for how a feature or bug is working its way through this process.

At any point in time, you can look at the “To-Do” list page for a status on your favorite new feature, task, or bug. And, if you have a chunk of features piled up in an “Iteration 1” list, you can see the number of items being worked through to completion. (There are even third-party apps that allow you to do burndown charts if they appeal to you.)

Requirements/Bugs

The business has a desired priority for features as expressed by the order of items in the “staging” lists, and especially the On-Deck list (to borrow a baseball metaphor for “What’s next”). For major new features, the business and the developers will work out a rough Release Plan. But for simple enhancements and bugs, the business can simply add desired items to the lists.

Development

The Developer will pick a new task off of the top of the On-Deck list. There will usually be a conversation around the issue to ensure proper understanding (unless it is self-explanatory). Ideally, we get the business/testers helping to write User Acceptance Tests in the BDD form of Given… When… Then… The Developer moves this feature into the “In Progress” list. If desired/possible, the user could add a date estimate for the task.

The Developer will create tests, be they Cucumber, RSpec, or UnitTest, or all 3 types. If possible, the Testers can help write the up-front acceptance tests, so that they are intimate with the feature/bug. The Developer writes code until the tests pass.

Once the issue is complete, and the tests are passing (and you didn’t break any other tests), the Developer: commits the code, updates the TEST server, and does a smoke test to ensure things still work as expected. The Developer then moves the task to the “To Be QA’d” list, where the Testers will now verify the issue is indeed complete and correct on TEST. Close collaboration between Tester and Developer is warranted here, as the nature of the fix and any developer tests can be explained so that testing can be as effective as possible. Plus we want to maximize use of automated tests, and minimize any unnecessary manual tests (as they are time consuming and expensive) given the nature of the code changes.

Testing

If the QA passes, the Tester moves the task to the “To Be Released” list. Depending on the nature of the feature, the Business can also help to “test” and ensure things work as expected. If the test fails, then the task is moved back to the On-Deck list and the details added to the issue as comments (if simple) and image uploads (add image link to the comments), or to the issue tracker if it is more complex (even though it is technically not an issue against the released product).

Release

The Developer will schedule the move for “To Be Released” tasks to the PRODuction box. This may require coordination with the client’s IT team if they have internal controls. Following release, the application is smoke tested to be sure the basics are still functioning. If any problems arise, the server can be rolled back, or fixed ASAP, based on the nature of the error.

Once the items are released to PROD, you can indicate that they are “complete.” If the issues came from a list that you want to keep for posterity, simply drag the completed task to the original list (“Iteration 1” for example). Now you can see the items in that list that have been released (i.e., completed).

Summary

I’ve used Jira and much more elaborate SDLC schemes in the past. But a recent Rails project with just a few folks on the team, found us trying out Basecamp. By creating various lists, we can mimic the Kanban-style wall board that Greenhooper provides within Jira. Because the lists allow simple ordering to show priority, and you can drag-and-drop between lists, and you can track your time, I think we have a very lightweight, winning combination.

This process is equally at home doing one feature all the way through, or an entire iteration’s worth of features in a bigger chunk. (Basically dependent on the cost of testing and the appetite of the end-user for changes.)